What if ChatGPT Was Just the Beginning of a New Era of Deflation?

Everyone has been so worried about inflation, but what if deflation was just around the corner?

What if, instead of trying to stop deflation at all costs, we embrace it? Deflation becomes something celebrated because it means that we are getting more for less.

Jeff Booth

After launching at the end of November, OpenAI’s ChatGPT has taken the world by storm. It reached one million users after just five days, and it has now reached 100 million users in about two months. Nothing in the history of new technologies comes even remotely close to the meteoric rise of ChatGPT. If you have a white-collar job and you are not yet using ChatGPT, you are doing something wrong.

After its multibillion-dollar investment in OpenAI a few weeks ago, Microsoft is already rolling out a version of its popular videoconferencing app Teams powered by ChatGPT.

Last week, ChatGPT earned respectable scores on medical, legal, and business exams. This week, Glass AI, which uses ChatGPT in the background, released a user interface that generates a differential medical diagnosis and a treatment plan after the user enters a description of the patient and their symptoms. Click on the tweet below to see a demo or go to Glass AI to play with it.

One industry that is already being disrupted by AI is education. It is so easy to have ChatGPT do one’s homework and write essays that schools and universities are forced to rethink how they test students. But it looks like the window for students to have ChatGPT do their homework is already closing. A new website called GPTZero just launched, and it can detect AI-generated content. Try it for yourself and see if you can fool it.

But what are the broader implications of unleashing a tool as powerful as ChatGPT on the world? Raoul Pal has a hunch that is worth considering.

The last point, on the cost of expertise dropping to zero, is what resonated with me. The current version of ChatGPT may not be perfect, but it can already do so much: summarizing documents, explaining the state of research in any domain, giving medical or legal advice, writing fiction and poetry, and more. Your imagination is the limit.

It used to take a decade and hundreds of thousands of dollars to train doctors. What if every doctor now had an AI assistant? What about hospitals in remote areas that are lacking certain specialists? AI could fill the gap. But then, do we need as many people with white-collar jobs as we have now?

New technologies have always destroyed jobs, but they have also always created new ones. Perhaps the same will be true of AI. But this time, it seems the world may not have much time to adapt, and the transition may prove painful if too many jobs are made redundant by AI in a short period of time.

On the one hand, AI is going to push the cost of many services to zero or close to zero. With ChatGPT, we already have an intern working for us 24/7 that can do any task we ask of it. Most of the time, you need to tweak what it produces, but it still saves you a considerable amount of time. On the other hand, it may put many people out of a job. If that is the case, what do you do with people whose skills are no longer needed by society? Do all roads lead to Universal Basic Income (UBI)? Topic for another newsletter…

Is Adani the New FTX?

The big scandal rocking the financial world over the past two weeks comes from India. Short-selling firm Hindenburg Research published a 100+ page report summarizing a two-year investigation into one of India’s largest conglomerates: the Adani Group. Its founder and chairman was until recently among the five richest people in the world. But that was before Hindenburg Research.

Hindenburg Research is an investment firm that bets that the share prices of its targets will go down, not up. So, it is not a neutral party, since it stands to benefit enormously if the share prices of its targets drop.

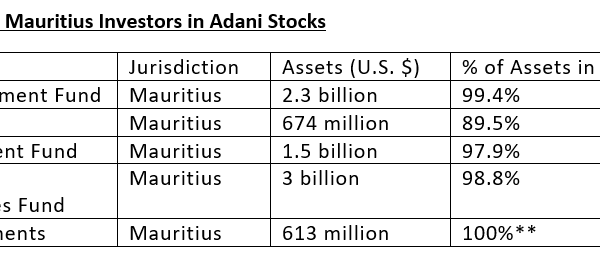

So, what does Hindenburg Research accuse Adani of? You can read the (long) thread summarizing their report and the full report by clicking on the tweet below. Long story short: Adani seems to have set up a lot of shell companies in tax havens to pump up the price of its stocks.

Or you can use a service like Bearly AI to summarize it for you.

Here is the summary of the 100+ page report prepared by Bearly AI:

This article reveals the findings of a two-year investigation into the Adani Group, a large Indian conglomerate, which suggests that the group has engaged in stock manipulation and accounting fraud.

Key takeaways:

Adani Group's 7 key listed companies have spiked an average of 819% in the past 3 years.

5 of 7 key listed companies have reported ‘current ratios’ below 1, indicating near-term liquidity pressure.

The Adani Group has previously been the focus of 4 major government fraud investigations which have alleged money laundering, theft of taxpayer funds and corruption.

Counter arguments:

Adani Group's financials may be taken at face value, which would indicate that the group's 7 key listed companies have 85% downside purely on a fundamental basis.

The Adani family members have been accused of cooperating to create offshore shell entities in tax-haven jurisdictions, but the allegations have not been proven.

Question: What is the one thing you shouldn’t do when your company is in trouble? Say that everything is fine and dismiss the attacks as baseless... Because then it sounds suspicious.

Watch Gautam Adani, Chairman and Founder of Adani Group, defend his group in this short video and decide for yourself if you believe him. Given how much the stock prices of his companies dropped after this video, it looks like he didn’t convince his investors…

Concept of the Week

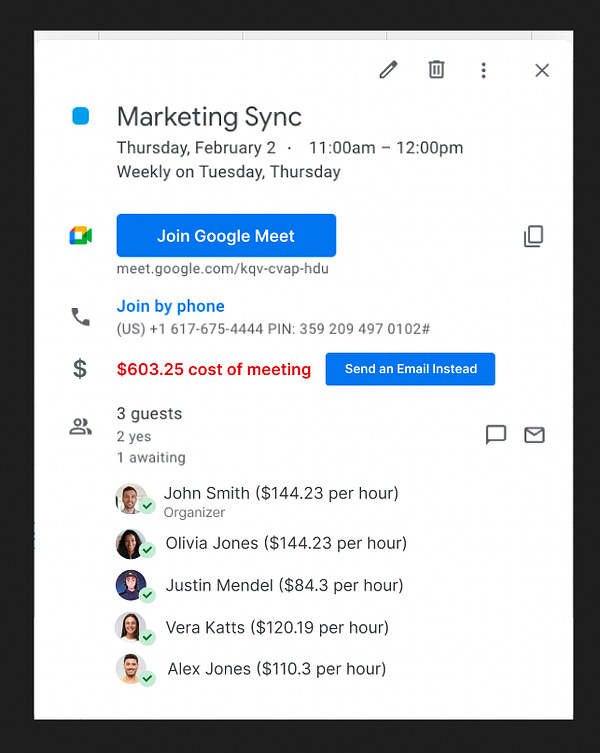

Do you spend too much time in meetings? Do you have this feeling that most meetings you join could have been replaced by an email exchange? Then you will like the concept presented below.

In this viral tweet, which has been viewed by more than 12 million people, gaut suggests showing the cost of a meeting based on the hourly cost of its participants (I would suggest rounding the numbers so that people cannot reverse-engineer their colleagues’ salaries). I find this concept brilliant. It would force people to think twice before scheduling a meeting, especially if the cost were recorded somewhere and you or your manager received a recap at the end of the month.
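To make the arithmetic concrete, here is a minimal sketch of how such a meeting cost could be computed. Everything in it is an assumption made for illustration: the `Participant` class, the `meeting_cost` function, the hourly figures, and the rounding step of 50 are hypothetical and do not come from the tweet or from any calendar tool. The idea is simply to add up the hourly costs of the attendees, multiply by the meeting length, and round the total so that no individual salary can be read off the result.

```python
from dataclasses import dataclass


@dataclass
class Participant:
    name: str
    hourly_cost: float  # hypothetical cost per hour for this person


def round_to_step(value: float, step: int) -> int:
    """Round a non-negative value to the nearest multiple of `step` (halves round up)."""
    return int(value / step + 0.5) * step


def meeting_cost(participants: list[Participant],
                 duration_minutes: int,
                 rounding_step: int = 50) -> int:
    """Estimate the cost of a meeting, rounded to hide individual salaries."""
    hours = duration_minutes / 60
    raw_cost = sum(p.hourly_cost for p in participants) * hours
    return round_to_step(raw_cost, rounding_step)


if __name__ == "__main__":
    # Made-up attendees and hourly costs, for illustration only.
    attendees = [
        Participant("Alice", 80),
        Participant("Bob", 65),
        Participant("Carol", 120),
    ]
    # 265 per hour for 45 minutes is 198.75, displayed as 200.
    print(meeting_cost(attendees, duration_minutes=45))
```

Summing these rounded figures over a month would give the kind of recap mentioned above. A coarser rounding step hides salaries better, at the price of a less precise total.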